When we provide survey research services to new clients, we often come across cynicism about research statistics, and honestly, I can’t blame them. Free survey software is widely available and widely used, which unfortunately means many more people without a solid background in research are out there collecting information without understanding exactly what they are doing. I applaud them for trying to make a go of it, and some actually succeed, but the reality is that the majority of these studies produce inaccurate statistics and bad data that feed the fire of doubt among the cynics.

Fortunately, a little basic knowledge goes a long way!

At our company we embrace the DIY future of survey research, and we know that free tools are here to stay. Our solution is to help organizations, one at a time, discover a process they can use to conduct research so they can depend more on their results and stop the spread of unintended misinformation. One basic piece of knowledge often missing from the DIY researcher equation is the number of responses needed to get predictive results. I’ve been seeing this almost everywhere I go lately, whether in conference presentations, blogs, newscasts, or webcasts. What I see are research results presented as “facts” that are either based on too few responses or presented without any reference to sample or population sizes, leaving me to wonder how many people contributed to these facts.

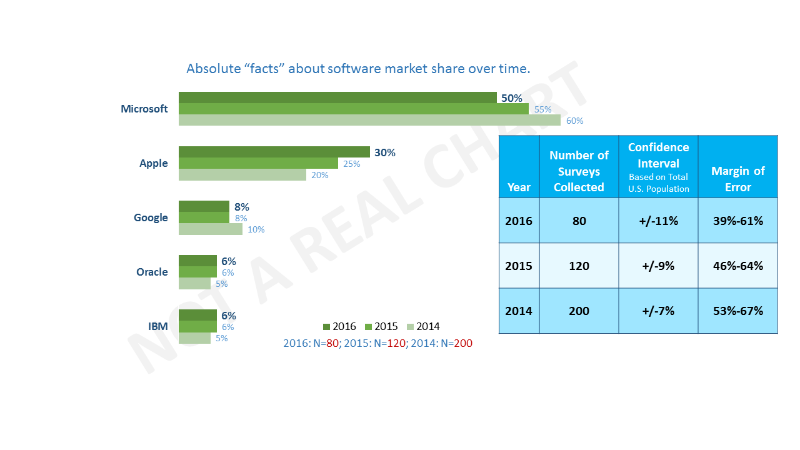

So, to help this basic knowledge reach as many people as possible and spread to the highest hills, we’re going to keep this really simple and also give you a tool for calculating the number of people you need in your study to get predictive results. The chart shows some made-up data of my own in green, followed by an explanation in blue of how predictive these numbers actually are, based on the number of surveys collected (see the red numbers below the chart).

You get the margin of error figure by using a sample calculator. Here is a link to one that you can use, courtesy of beautiful Australia: Sample Calculator. A sample calculator tells you how predictive a subset of a larger population is. For the example above, take the entire U.S. population according to the latest complete census figures (319,000,000) and enter it into the population size box, set the proportion to .5 for a conservative estimate, enter 80 into the sample size box, and hit calculate. The calculator reports a confidence interval of 11%. That 11% is the margin of error: the range above and below the reported percentage within which the true figure is likely to fall. So if you conducted this study again with another group of 80 similar people, you could be 95% sure that your answer for Microsoft in 2016 would be between 39 and 61 percent. Not very good, is it? For that matter, neither is the 2015 data or the 2014 data. The bottom line is that I would not invest my fictitious millions on anything here.
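If you would rather see the arithmetic than trust a black box, here is a minimal Python sketch of the standard margin of error formula for a proportion, with a finite population correction. I am assuming this is close to what a typical sample calculator does; the linked calculator may round or compute things slightly differently, and the function and parameter names are my own.

```python
import math
from statistics import NormalDist


def margin_of_error(sample_size, population_size, proportion=0.5, confidence=0.95):
    """Approximate margin of error (as a fraction) for a survey proportion.

    Assumes simple random sampling and the normal approximation.
    """
    # z-score for the chosen confidence level (about 1.96 for 95%)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    # Standard error of a proportion; 0.5 is the most conservative choice
    standard_error = math.sqrt(proportion * (1 - proportion) / sample_size)
    # Finite population correction; close to 1 when the population is huge
    fpc = math.sqrt((population_size - sample_size) / (population_size - 1))
    return z * standard_error * fpc


for n in (80, 400, 1000):
    moe = margin_of_error(n, 319_000_000)
    print(f"{n} responses: +/-{moe * 100:.1f}%")
```

With a population of 319,000,000, 80 responses come out at roughly plus or minus 11%. Notice that the population size barely matters once it is this large; the number of responses does almost all the work.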

Now, using that same sample calculator, replace 80 with 400 and hit calculate. How does being plus or minus 5% sound? Much better, don’t you think? Now you can be 95% sure that your results will be between 45 and 55 percent with a similar group. This is the number of responses I recommend clients start with, with the optimal number being 1,000 (+/-3%). Above that, the returns diminish quickly: it takes roughly 2,400 responses in total to get to +/-2% and about 9,600 to get to +/-1%.
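You can also run the question in the other direction: pick the margin of error you can live with and work out how many responses that takes. The sketch below uses the standard sample size formula for a proportion at 95% confidence. The function name and defaults are my own, and it assumes a simple random sample, so treat the output as a planning estimate rather than a guarantee.

```python
import math
from statistics import NormalDist


def required_sample_size(target_margin, population_size, proportion=0.5, confidence=0.95):
    """Estimate the responses needed to reach a target margin of error.

    target_margin is a fraction, for example 0.05 for +/-5%.
    """
    # z-score for the chosen confidence level (about 1.96 for 95%)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    # Sample size for an effectively infinite population
    n_infinite = z**2 * proportion * (1 - proportion) / target_margin**2
    # Adjust downward for a finite population (tiny effect for large ones)
    n_adjusted = n_infinite / (1 + (n_infinite - 1) / population_size)
    return math.ceil(n_adjusted)


for margin in (0.05, 0.03, 0.02, 0.01):
    n = required_sample_size(margin, 319_000_000)
    print(f"+/-{margin * 100:.0f}% needs about {n} responses")
```

The exact outputs differ a little from the round numbers used in this post: about 385 responses for +/-5% and about 1,068 for +/-3%. Halving the margin of error takes roughly four times the responses, which is why the last few points of precision get expensive.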

If getting thousands of responses is easy and free for you, you should do it! Most organizations don’t have access to that kind of engaged email list, nor do they have the budget to cover the cost per complete on larger studies.

Now, for the love of all things survey, please share this with anyone and everyone you know who intends to conduct a survey on their own. The world needs more good information. If anything is unclear here, just let me know in the comments section or send me an email at nate@growthsurveysystems.com. I’m always happy to help.

-Nate Laban